Bringing AI-assisted creation into Kokai

Contextual targeting on TTD relies on custom categories

Advertisers use custom categories - collections of chosen keywords - to target ad placements against specific content. Today, custom categories are built through exact or token matching, which means an advertiser typing "apple" gets results for both Apple the fruit and Apple the technology company, with no way for the system to disambiguate. Advertisers with specific contextual needs end up either over-broadening their targeting or building exhaustive keyword lists manually.
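To make the ambiguity concrete, here is a minimal sketch of token matching. It is an illustration under assumptions, not TTD's actual matcher: the function names `tokenize` and `matches_category` and the sample pages are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def matches_category(page_text: str, keywords: list[str]) -> bool:
    """Return True if any keyword appears as a token in the page text."""
    tokens = tokenize(page_text)
    return any(keyword.lower() in tokens for keyword in keywords)


# Hypothetical pages: two very different contexts share the token "apple".
pages = {
    "fruit": "How to bake an apple pie with fresh orchard fruit",
    "tech": "Apple announces a new laptop at its developer conference",
    "other": "Local marathon results and training tips",
}
category = ["apple"]

matched = [name for name, text in pages.items() if matches_category(text, category)]
print(matched)  # both the fruit page and the tech page match
```

The matcher only sees tokens, so it has no signal for which sense of "apple" the advertiser meant.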

This matters more now than it used to. Contextual targeting was historically a blocking tool - more often than not, applied retroactively to keep ads away from unsafe content. As the industry shifts away from third-party identifiers, contextual is becoming a preemptive targeting strategy in its own right. Custom categories that can't disambiguate intent are a real ceiling on that growth.

The first AI-powered workflow in Kokai

Because this is the first AI-powered workflow in Kokai, more is at stake than the feature itself. We're setting a precedent for how AI shows up across the platform, both visually (in iconography) and in language. Decisions made here will be inherited by every AI workflow that follows.

The first version

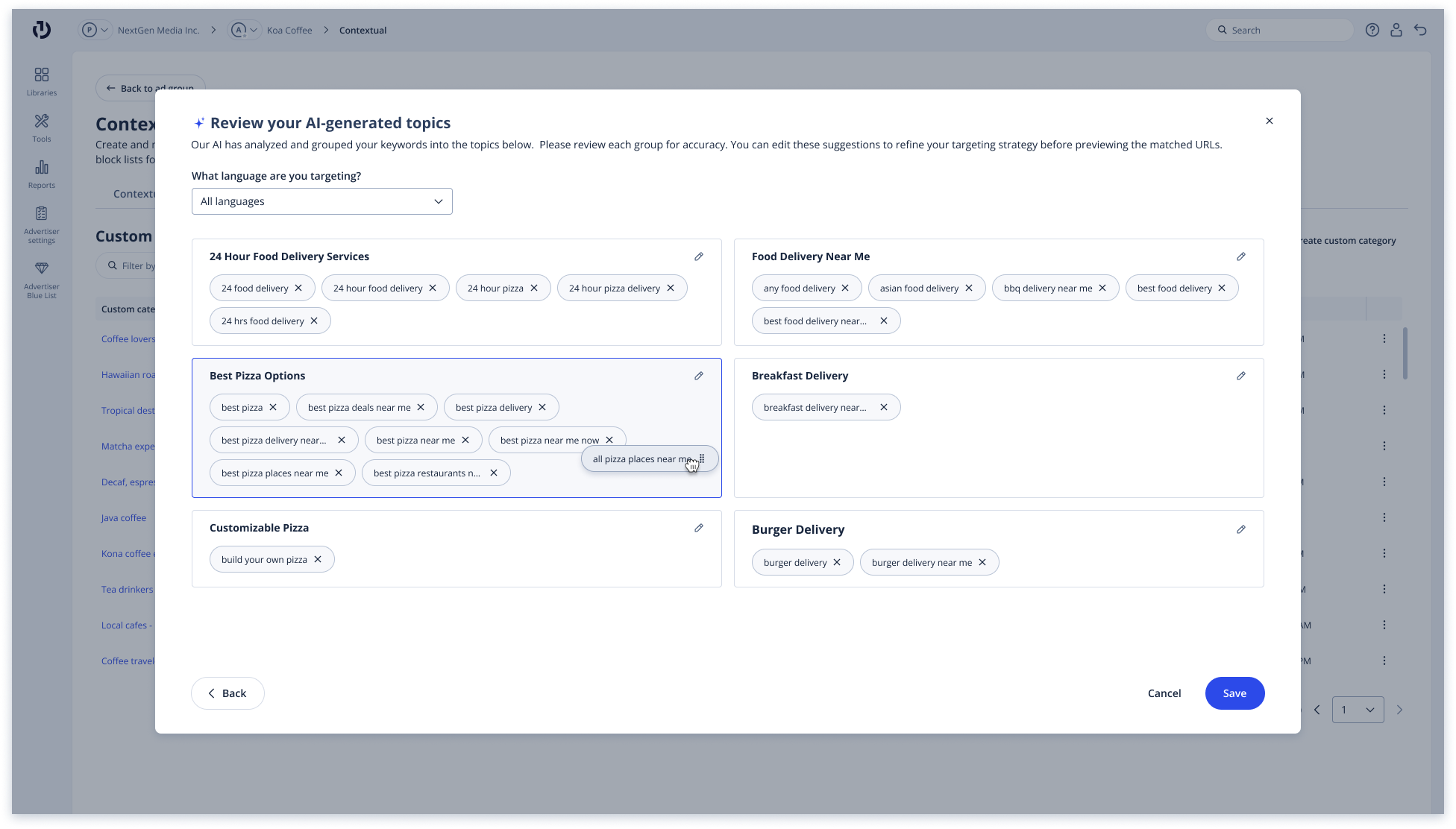

The data team came in with a working prototype of the flow they envisioned: (1) users enter keywords, (2) the LLM returns categorized results that users can recategorize and relabel, (3) the system shows a preview of the content their ads will appear alongside. To understand their requirements, I quickly mocked up how the flow would appear in the Kokai UI (Step 2 is shown above). As I studied it more closely, I realized that the recategorization step served to train our model rather than to provide any direct user benefit.

V1 - The data team's proposal executed in design: users are asked to recategorize the LLM's outputs


The alternative

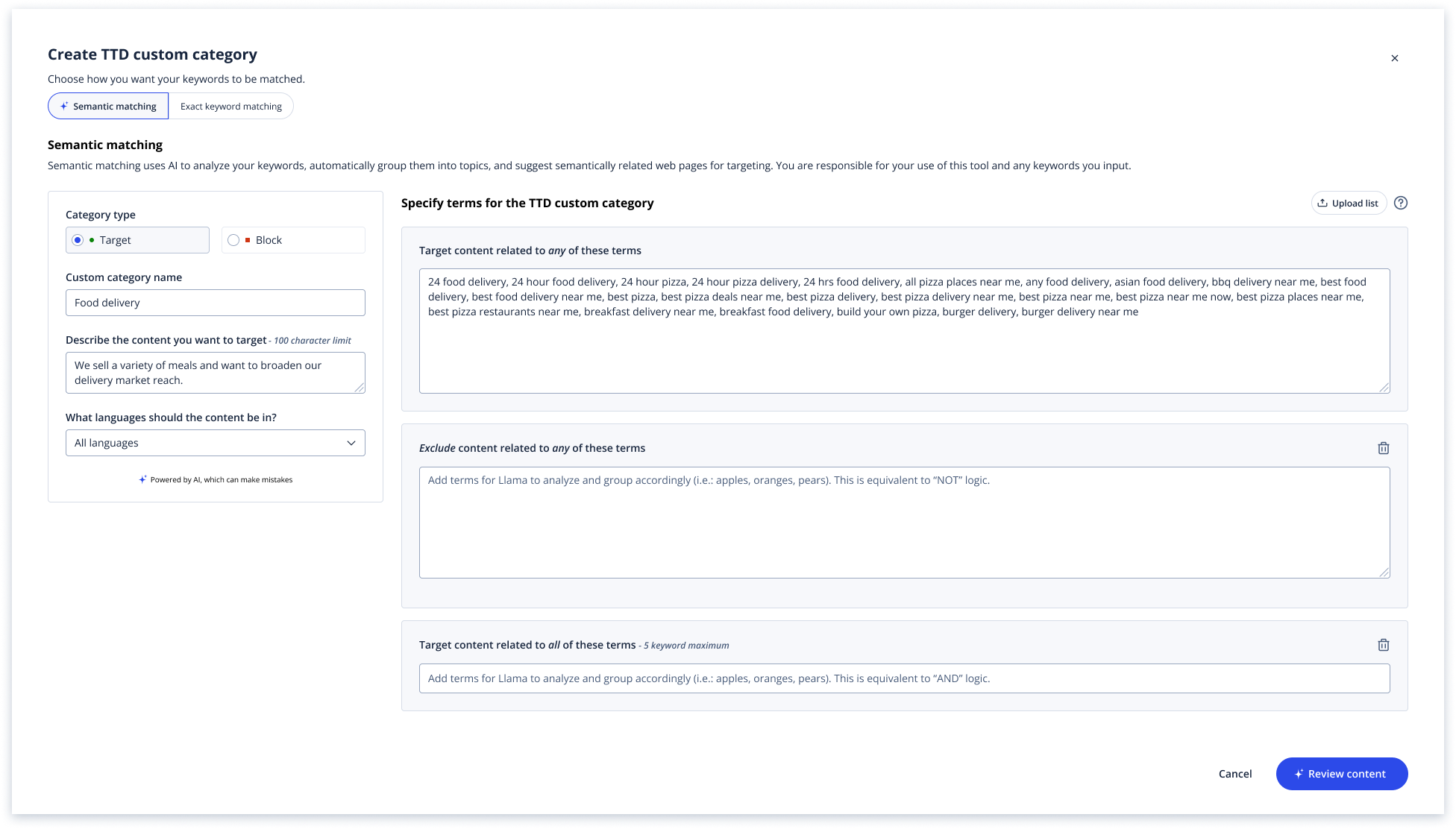

I brought this back to the team and proposed an alternative: rather than asking users to clean up the model's outputs after the fact, give the model better inputs upfront. Specifically, ask users to provide a short business statement alongside their keywords, so the LLM can infer intent (for example, Apple the company vs. Apple the fruit) before generating results. This shortened the user flow significantly and presented users with more accurate results right away.
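As a rough sketch of what "better inputs upfront" could look like, the snippet below combines the keywords and the business statement into a single prompt. The function name `build_prompt` and the prompt wording are assumptions made for illustration; the actual prompt and model call used by the data team are not described here.

```python
def build_prompt(keywords: list[str], business_statement: str) -> str:
    """Combine seed keywords with the advertiser's business statement.

    Hypothetical helper: the wording is illustrative, not the production prompt.
    """
    keyword_list = ", ".join(keywords)
    return (
        "You are helping an advertiser build a contextual targeting category.\n"
        f"Business statement: {business_statement.strip()}\n"
        f"Seed keywords: {keyword_list}\n"
        "Interpret each keyword in the sense that fits the business statement, "
        "then suggest related keywords in that sense only."
    )


prompt = build_prompt(["apple"], "We sell refurbished laptops and phones.")
print(prompt)
```

The business statement travels with the keywords, so the model has the context it needs before it generates anything, instead of relying on the user to correct results afterward.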

AI workflows present a risk of designing user steps around model needs (more training data, fewer hallucinations, easier validation) rather than user needs. Identifying these journeys and reframing the flow to prioritize user needs feels like a core competency for designing AI products.

V2 - My reframed design: requiring a business goal upfront to help the LLM infer intent before generating results


A parallel exploration

In parallel, I also proposed a chat-based experience (V3) that lets advertisers describe their intent conversationally, following a more widely adopted AI interaction pattern.

A parallel design problem: boolean logic framing

V2's structured flow presents a usability issue that I flagged to the team. Custom categories let advertisers combine targeting rules using boolean logic: targeting content that matches all keywords vs. any keyword, and blocking all listed keywords vs. targeting keywords with some exclusions. Users may misread "all" as "any," conflate "block" with "exclude," or skip the choice entirely and ship campaigns with unintended targeting.
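The difference between these choices is easy to miss in a form but large in effect. The sketch below models only the "all vs. any" choice plus exclusions; the `CategoryRule` class and its fields are hypothetical, and the real rule semantics in Kokai may differ.

```python
from dataclasses import dataclass, field


@dataclass
class CategoryRule:
    """Hypothetical targeting rule: match all or any keyword, minus exclusions."""

    keywords: set[str]
    match_all: bool = False  # False means "any keyword" is enough
    excluded: set[str] = field(default_factory=set)

    def allows(self, page_tokens: set[str]) -> bool:
        # An excluded keyword on the page always rules the page out.
        if self.excluded & page_tokens:
            return False
        if self.match_all:
            return self.keywords <= page_tokens  # every keyword must be present
        return bool(self.keywords & page_tokens)  # at least one keyword present


page = {"apple", "laptop", "review"}

any_rule = CategoryRule({"apple", "iphone"})
all_rule = CategoryRule({"apple", "iphone"}, match_all=True)
excluding_rule = CategoryRule({"apple"}, excluded={"review"})

print(any_rule.allows(page))        # True: "apple" is on the page
print(all_rule.allows(page))        # False: "iphone" is missing
print(excluding_rule.allows(page))  # False: "review" is excluded
```

The same page and nearly the same keywords produce opposite outcomes depending on a single toggle, which is why a misread label can quietly change where a campaign runs.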

V3's chat-based experience minimizes this risk by letting users describe intent in their own words, with the LLM inferring the logic or asking clarifying questions when needed.

I'm working with my content writer on clearer copy, plain-language defaults, and inline examples to improve user comprehension of these rules. Both versions will need to communicate these concepts clearly, even if the chat-based version handles them implicitly.

The team's response

The data team and our PM advocated for the structured V2 approach, citing fewer degrees of freedom, more predictable outputs, and an easier validation model. Both approaches have real merit, and the tradeoff between expressive flexibility and predictable accuracy is going to keep surfacing as AI features expand across the platform. To move this forward, I'm planning usability testing to determine whether advertisers can articulate their intent clearly enough in the business goal for the LLM to disambiguate accurately, and whether they correctly interpret the language we use in the boolean logic options.

Open questions

- How much should the LLM's reasoning be visible to the advertiser? Showing the inferred keywords increases trust but also invites micromanagement.

- Is "AI-powered" the right framing in the UI, or does it set expectations the system can't meet? Narrower, more specific framing ("suggested keywords based on your description") might be more honest.